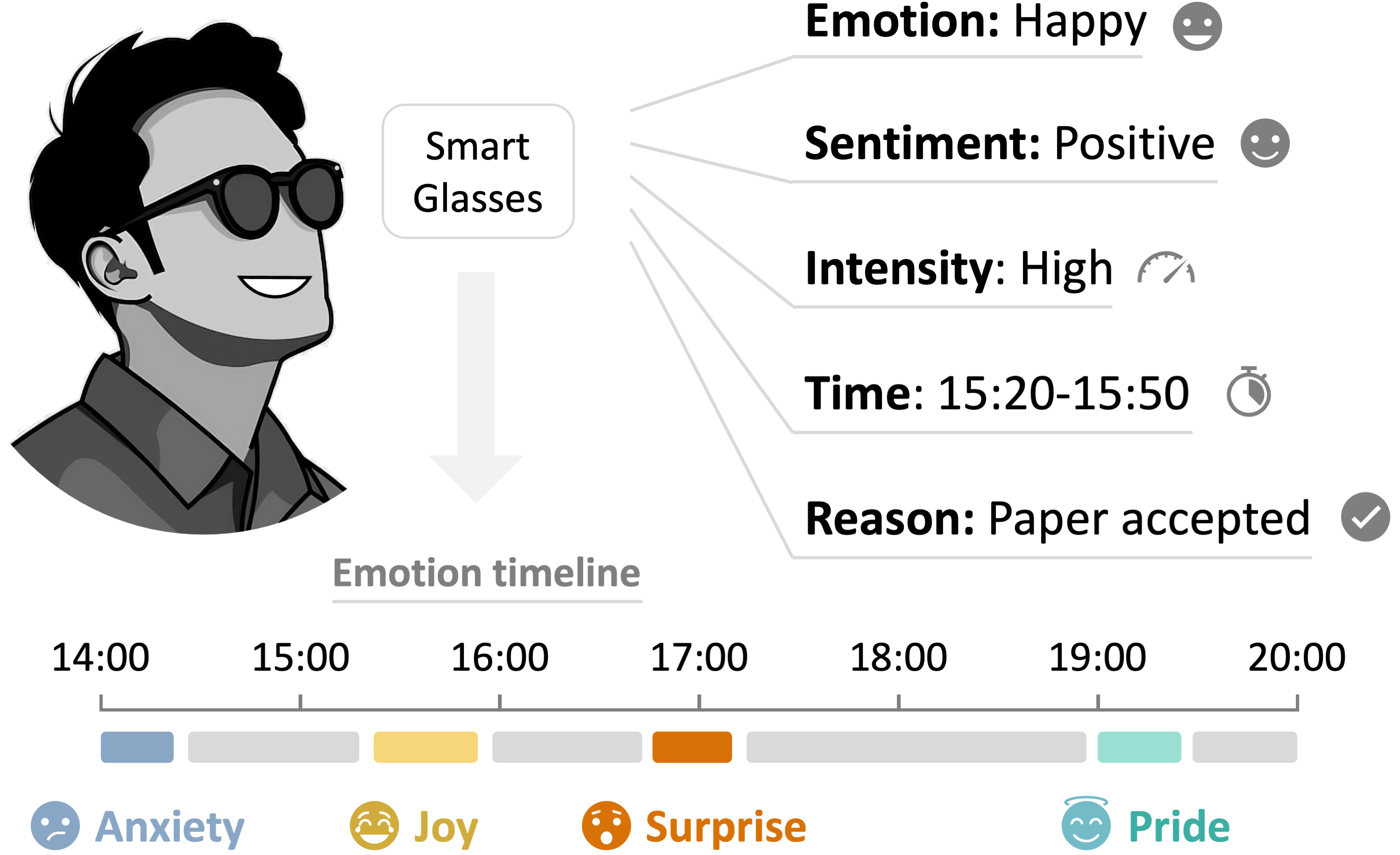

We introduce the novel task of egocentric self-emotion tracking, which aims to infer an individual's evolving emotions from egocentric multimodal streams such as voice, visual surroundings, semantic subtext, and eye-tracking signals.

To establish this research direction, we present:

(1) the OSMO dataset, a large-scale annotation effort on 110 hours of existing bilingual smart-glasses recordings, establishing the largest egocentric emotion dataset and the first with subject-wise emotion timelines;

(2) the OSMO benchmark, a suite of five tasks (emotion recognition, sentiment, intensity, localization, and reasoning) that redefine emotion understanding as a continuous, context-aware process rather than discrete classification of trimmed videos; and

(3) OSIRIS, a large multimodal model that tracks emotions over time by reasoning over the user's personal emotion history, current expressions, and egocentric observations.

Extensive evaluations show that OSIRIS achieves state-of-the-art performance, delivering, for the first time, coherent emotion timelines from egocentric data.

The dataset, model, and code will be fully open-sourced upon publication.